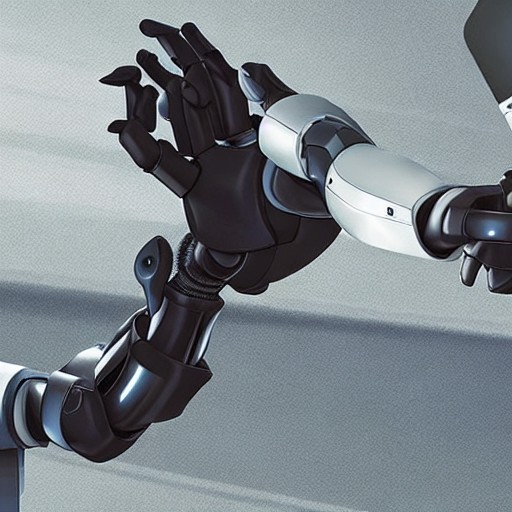

As artificial intelligence (AI) takes over more tasks, websites are scrambling to define its boundaries. This week, Wikipedia drew the line: editors may not use AI-generated text. The ban on using large language models (LLMs) to update or rewrite articles has sparked debate among the site's vast community of volunteer contributors.

The controversial policy change was approved by 403 votes, with just two against. It allows AI to suggest minor edits, but with a caveat: human oversight is crucial to ensure accuracy. 'Be careful,' warns the new policy, acknowledging that AI can sometimes stray from its brief and alter the meaning of a text if its output goes unchecked.

This move towards stricter guidelines for AI integration reflects broader industry concerns about maintaining editorial standards in an era of rapid technological change. Yet it also raises questions: is there such a thing as too much human touch? And how do we ensure that the wisdom of the crowd remains untainted by algorithmic interference?

For now, Wikipedia remains a bastion of collaborative knowledge where humans still have the final say. However, the future of content creation may well lie in finding a balance between human expertise and AI efficiency.